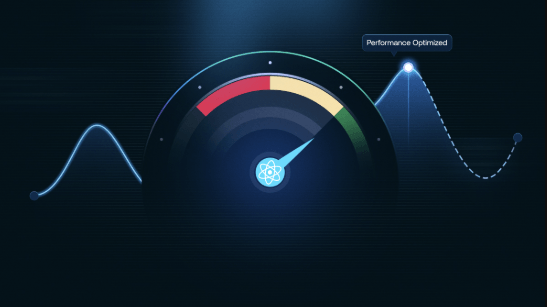

Performance optimization tools form a data-driven ecosystem that measures resource usage, flags bottlenecks, and guides decisions at scale. They rely on objective profiling, repeatable tracing, and disciplined experimentation, all with minimal intrusion on the system being measured. By automating tuning and enabling deterministic experiments, they produce outcomes that can be traced and compared across environments. Used together, they surface gaps, expose tradeoffs, and help balance flexibility with maintainability. The result is a clearer decision path, though its limits need to be re-examined as systems evolve.

What Performance Optimization Tools Do (Foundational Overview)

Performance optimization tools are software and methodologies that systematically identify, measure, and improve system efficiency. They quantify resource usage, flag bottlenecks, and guide implementation decisions at scale. By enforcing dependency budgets and evaluating memory locality, teams can align workloads with available capacity. The approach is data-driven, repeatable, and scalable: teams stay free to experiment, while every claimed improvement is still assessed against objective measurements.

How to Profile and Trace Gold-Standard Workloads

Profiling and tracing gold-standard workloads requires a disciplined, data-driven approach that builds on the profiling fundamentals described earlier.

This section outlines repeatable profiling strategies and tracing methods that give scalable insight into system behavior.

The emphasis is on objective measurement, minimal intrusion, and reproducible workflows. With these in place, teams can compare workloads, diagnose bottlenecks, and prioritize investments without being locked into particular architectural choices.
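As a rough illustration of a repeatable, low-intrusion measurement, the sketch below times a workload several times after a warmup run and reports summary statistics rather than a single number. The `measure` helper and `sample_workload` function are hypothetical names introduced here for illustration; they are not part of any specific profiling tool.

```python
import statistics
import time


def measure(fn, *, repeats=5, warmup=1):
    """Time fn() several times and summarize the runs."""
    for _ in range(warmup):
        fn()  # warmup run so cold-start effects do not skew the samples
    samples = []
    for _ in range(repeats):
        start = time.perf_counter()
        fn()
        samples.append(time.perf_counter() - start)
    return {
        "runs": repeats,
        "median_s": statistics.median(samples),
        "min_s": min(samples),
        "max_s": max(samples),
    }


def sample_workload():
    # Hypothetical stand-in for a real workload.
    return sum(i * i for i in range(50_000))


report = measure(sample_workload, repeats=5)
print(report)
```

Reporting the median alongside the minimum and maximum makes run-to-run variation visible. For function-level attribution, Python's standard `cProfile` module is one option to layer on top of this kind of wall-clock timing.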

Tuning and Automation: Turning Data Into Faster Apps

Data-driven optimization combines systematic measurement, automated workflows, and repeatable experiments to yield tangible speedups without compromising stability.

Tuning automation works as a disciplined, scalable practice built on lightweight feedback loops, continuous refinement, and deterministic results.

Tracing each change back to the data that motivated it keeps decisions auditable and preserves context across environments.

The approach reduces guesswork, enables rapid iteration, and keeps performance gains aligned with evolving application needs.
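A minimal sketch of such a feedback loop follows: it sweeps a set of candidate settings, runs the same experiment for each one, and picks the best result. The cost model in `run_experiment` is an invented placeholder (the constants are assumptions, not measurements); in practice it would be replaced by a real benchmark run. The fixed seed is what makes the experiment deterministic.

```python
import random


def run_experiment(batch_size, seed=0):
    """Hypothetical cost model: simulated latency in ms for a batch size."""
    rng = random.Random(seed)           # fixed seed keeps each run reproducible
    overhead = 200.0 / batch_size       # fixed cost amortized over the batch
    processing = 0.05 * batch_size      # larger batches take longer to process
    noise = rng.uniform(0, 0.01)        # small simulated measurement noise
    return overhead + processing + noise


def tune(candidates, seed=0):
    """Evaluate every candidate and return the best one plus all results."""
    results = {c: run_experiment(c, seed) for c in candidates}
    best = min(results, key=results.get)
    return best, results


best, results = tune([8, 16, 32, 64, 128, 256])
print(best, round(results[best], 3))
```

Keeping the full `results` mapping, not just the winner, preserves the record of what was tried, which supports the traceability described above.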

Choosing the Right Toolset: Criteria, Comparisons, and Tradeoffs

Choosing the right toolset hinges on clearly defined criteria, objective comparisons, and transparent tradeoffs across environments.

A sound evaluation compares candidate tools against the same criteria and quantifies both the performance gains each one enables and the impact the tool itself has on the profiled system.

It should also account for automation gaps and runtime overhead, since both limit how well a tool scales.

Careful evaluation supports integration decisions that balance flexibility and maintainability, and keeps deployment options open without overengineering.
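One simple way to make such a comparison explicit is a weighted decision matrix. The sketch below is illustrative only: the criteria names, weights, tool names, and 1 to 5 scores are all hypothetical placeholders that a team would replace with its own.

```python
# Hypothetical criteria weights (they sum to 1.0).
WEIGHTS = {
    "low_overhead": 0.4,
    "automation": 0.3,
    "integration": 0.2,
    "maintainability": 0.1,
}

# Hypothetical 1-5 scores for two made-up tools.
TOOLS = {
    "tool_a": {"low_overhead": 4, "automation": 2, "integration": 5, "maintainability": 3},
    "tool_b": {"low_overhead": 3, "automation": 5, "integration": 3, "maintainability": 4},
}


def weighted_score(scores, weights):
    """Sum each criterion score multiplied by its weight."""
    return sum(weights[name] * scores[name] for name in weights)


# Rank tools from highest to lowest weighted score.
ranking = sorted(
    TOOLS,
    key=lambda name: weighted_score(TOOLS[name], WEIGHTS),
    reverse=True,
)
for name in ranking:
    print(name, round(weighted_score(TOOLS[name], WEIGHTS), 2))
```

Writing the weights down forces the tradeoffs into the open: changing a weight and re-running the ranking shows how sensitive the choice is to each criterion.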

Frequently Asked Questions

How Do I Measure Real-World User Impact Beyond Benchmarks?

Real-world impact is measured with user-centric metrics that are collected continuously and triangulated across cohorts, sessions, and tasks. Because the method is data-driven, scalable, and transparent, decisions rest on observed outcomes rather than isolated benchmarks.
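As a small sketch of cohort-level analysis, the example below groups latency samples by cohort and reports a nearest-rank 95th percentile for each. The cohort names and latency values are made-up sample data, and the nearest-rank definition is one of several common percentile conventions.

```python
import math
from collections import defaultdict


def percentile(values, pct):
    """Nearest-rank percentile of a non-empty list of numbers."""
    ordered = sorted(values)
    rank = max(1, math.ceil(pct / 100 * len(ordered)))
    return ordered[rank - 1]


def p95_by_cohort(events):
    """Group (cohort, latency_ms) pairs and compute p95 per cohort."""
    grouped = defaultdict(list)
    for cohort, latency_ms in events:
        grouped[cohort].append(latency_ms)
    return {cohort: percentile(vals, 95) for cohort, vals in grouped.items()}


# Made-up sample data: (cohort, latency in milliseconds).
events = [
    ("mobile", 120), ("mobile", 340), ("mobile", 95), ("mobile", 410),
    ("desktop", 80), ("desktop", 70), ("desktop", 150),
]
summary = p95_by_cohort(events)
print(summary)
```

Tail percentiles per cohort tend to reveal problems that an overall average hides, such as one device class experiencing much slower responses than another.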

What Are Hidden Costs of Adopting Performance Tools?

Consider a hypothetical retailer that adopts a new performance tool and runs into three hidden costs: integration friction, data governance overhead, and ongoing license fees. These costs accumulate regardless of how well the tool benchmarks, so sustaining long-term value requires governance, training, and scalability planning.

Can Tools Impede Developer Productivity During Adoption?

Tool adoption can impede developer productivity at first, because learning curves and integration friction arrive before the benefits do. Monitoring typically shows short-term slowdowns, while a staged, scalable adoption strategy reduces friction and lets long-term gains emerge for autonomous teams.

How Do Tools Scale With Multi-Tenant and Cloud Ecosystems?

Tools scale with multi-tenant and cloud ecosystems through modular architectures, observable metrics, and automated provisioning. Cloud-native considerations also need to be aligned with governance, security, and performance SLAs to support flexible, data-driven growth.


What Governance Ensures Fair Tool Usage Across Teams?

Fair tool usage across teams depends on clear governance. Usage policies backed by transparent metrics, audits, and standardized escalation procedures provide data-driven, scalable control across multi-tenant environments while preserving teams' freedom to innovate.

Conclusion

Performance optimization tools deliver measurable, repeatable improvements through objective instrumentation, disciplined tracing, and automated experimentation. By quantifying resource usage and surfacing bottlenecks, they enable scalable, data-driven decision making across environments. For example, a hypothetical e-commerce platform might reduce latency by 32% after automated profiling and tuning for memory locality. The approach emphasizes traceable experiments, deterministic runs, and transparent tradeoffs, which leads to maintainable, deployable improvements that balance speed, cost, and reliability in production systems.